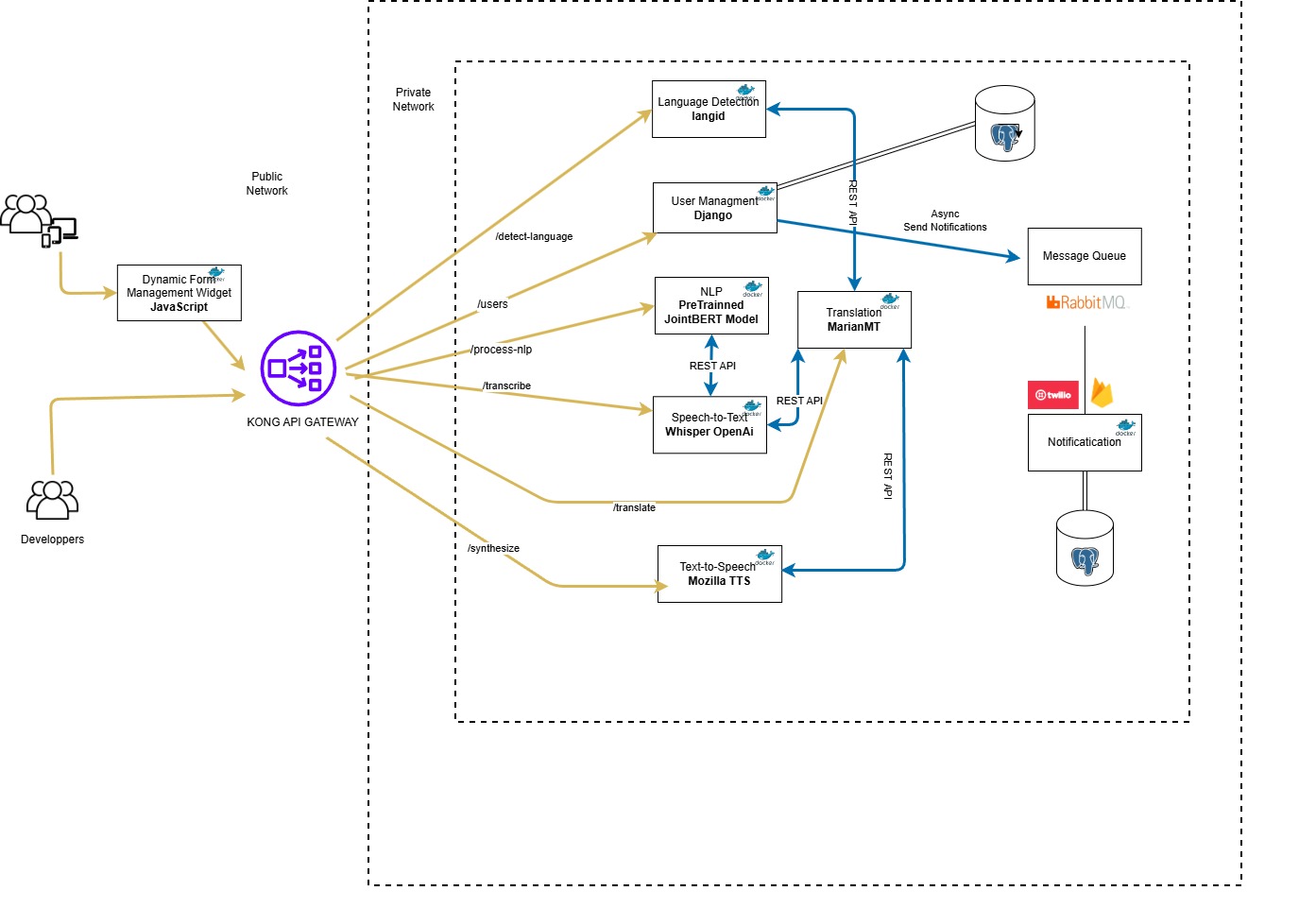

Ensuring the accessibility of web forms is crucial in today's interconnected society, particularly for people who are illiterate, have physical disabilities, or face language barriers. This paper aims to improve web form accessibility by presenting a novel approach that transforms traditional web forms into interactive audio forms, using a microservices architecture that integrates several speech and language technologies. MarianMT translates both user inputs and form questions. Mozilla TTS performs speech synthesis (text-to-speech), so users with reading difficulties can hear form questions aloud. Whisper speech recognition converts spoken responses into text. At the core of the system, a Natural Language Processing (NLP) module based on JointBERT, fine-tuned on domain-specific data, detects user intent and extracts key information (slots) for form completion. Tests show promising results, with 74% accuracy in intent detection and 70% accuracy in slot extraction. These findings highlight the system's potential to make web forms more accessible and to provide a more inclusive user experience.
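The flow described in the abstract can be pictured as a chain of service calls. The Python sketch below is only an illustration under stated assumptions, not the authors' implementation. Each service (Whisper, MarianMT, Mozilla TTS, JointBERT) is replaced by a plain callable with a hypothetical signature. The NLU service is assumed to return an intent label plus per-token BIO slot tags, which is the usual output shape of joint intent/slot models such as JointBERT. The intent name `provide_info` and the slot names are invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical service signatures. In the described architecture each would be
# a call to a separate microservice; here they are plain callables.
Transcribe = Callable[[bytes], str]   # speech -> text (Whisper's role)
Translate = Callable[[str], str]      # text -> text in another language (MarianMT's role)
Synthesize = Callable[[str], bytes]   # text -> speech (Mozilla TTS's role)
# text -> (intent, tokens, BIO tags): assumed output shape of a JointBERT-style model
Understand = Callable[[str], Tuple[str, List[str], List[str]]]


def bio_to_slots(tokens: List[str], tags: List[str]) -> Dict[str, str]:
    """Group BIO-tagged tokens into {slot_name: value}; a repeated slot keeps its last value."""
    slots: Dict[str, str] = {}
    name: Optional[str] = None
    span: List[str] = []
    for token, tag in zip(tokens, tags):
        if tag.startswith("I-") and tag[2:] == name:
            span.append(token)              # continue the open span
            continue
        if name is not None:                # any other tag closes the open span
            slots[name] = " ".join(span)
        if tag.startswith(("B-", "I-")):
            name, span = tag[2:], [token]   # B-x, or a stray I-x, opens a new span
        else:
            name, span = None, []           # "O" means outside any slot
    if name is not None:
        slots[name] = " ".join(span)
    return slots


@dataclass
class FormSession:
    """Collects form field values across spoken turns."""
    fields: Dict[str, str] = field(default_factory=dict)

    def ask(self, question: str, to_user_language: Translate,
            synthesize: Synthesize) -> bytes:
        """Translate a form question into the user's language and render it as audio."""
        return synthesize(to_user_language(question))

    def handle_answer(self, audio: bytes, transcribe: Transcribe,
                      to_form_language: Translate, understand: Understand) -> str:
        """Transcribe and translate a spoken answer, then store any extracted slots."""
        text = to_form_language(transcribe(audio))
        intent, tokens, tags = understand(text)
        if intent == "provide_info":  # hypothetical intent label for "user gives a value"
            self.fields.update(bio_to_slots(tokens, tags))
        return intent


if __name__ == "__main__":
    # Stub services standing in for the real microservices.
    session = FormSession()
    intent = session.handle_answer(
        b"<audio bytes>",
        transcribe=lambda audio: "je m'appelle Marie Dupont",
        to_form_language=lambda text: "my name is Marie Dupont",
        understand=lambda text: ("provide_info",
                                 ["my", "name", "is", "Marie", "Dupont"],
                                 ["O", "O", "O", "B-full_name", "I-full_name"]),
    )
    print(intent, session.fields)
```

With these stubs, the script prints `provide_info {'full_name': 'Marie Dupont'}`. Passing each service in as a parameter keeps the form logic independent of how the individual microservices are deployed, for example behind separate HTTP endpoints.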

Service Project

From Textual Web Forms to Audio Web Forms: Toward a Microservices Architecture Leveraging Natural Language Processing Techniques

April 16, 2026

